I built a half-baked prediction markets app to study signup fraud. 650 accounts on one laptop later.

Simul Sarker

CEO of DataCops

Last updated: May 7, 2026

I'm Simul. I'm launching DataCops today.

It's first-party trust infrastructure for signups and conversions: a CNAME that runs on your own subdomain, replaces a stack of analytics, consent, and bot detection vendors, and gives you the identity context for every visitor and every signup. Three years bootstrapped from Lisbon. UK incorporated. Today is the public launch.

Instead of doing the standard launch post (here's what we built, here are the features, here's the pricing), I want to share the actual research that shaped the product. Specifically how I figured out that signup fraud is not what I thought it was, by deliberately letting it happen on a real product for a month and watching.

The 650-account guy is the punchline. Stay until then.

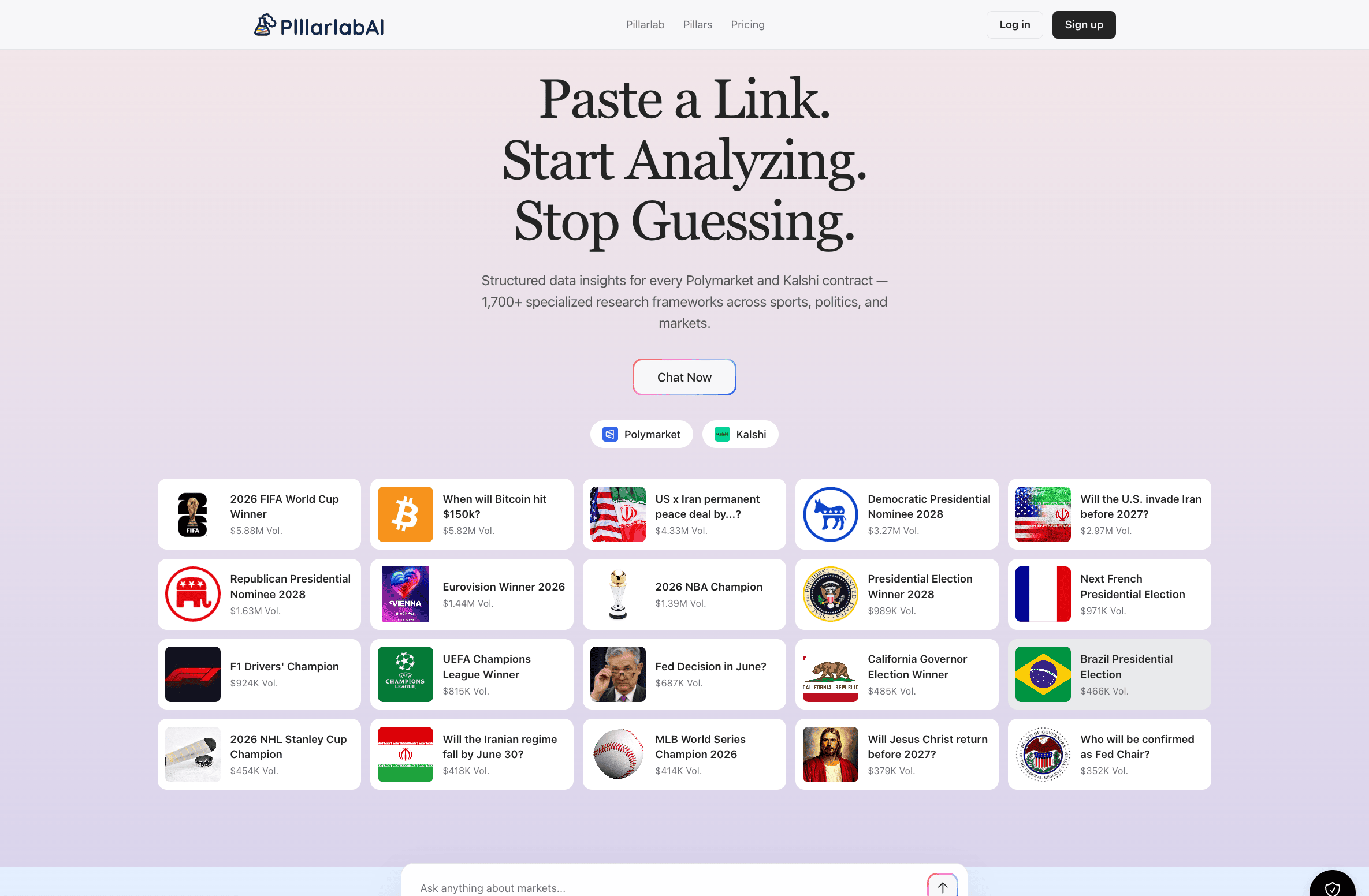

The setup: PillarlabAI

I needed real adversarial signup data. Not vendor white papers. Not synthetic test cases. Real humans doing real fraud against a real signup form, while I watched.

So I built PillarlabAI. An AI research tool for prediction markets. 5 days of vibe-coding the first version, then iterated. React frontend, OpenAI calls behind a thin auth layer, a research dashboard that pulls live data from Polymarket and Kalshi and runs analytical pillars across them.

It is a real product. Free tier with 50 credits on signup, paid tiers at $29, $99, and $349 per month, Stripe integration, annual plans with a 17% discount, the whole structure. I was not pretending. I wanted real people to use it. Some of them have. The product works.

But the part I cared about for this experiment was the funnel itself. The signup form. The free-tier credits. The audience.

If you have spent any time in crypto or prediction markets you know what that audience looks like. Sharp, manipulative, allergic to paying for anything, and roughly 40% of them running automation scripts as a hobby. There is no serious business plan around this niche. Polymarket and Kalshi are interesting. Everything orbiting them is mostly chaos.

This was perfect for a research instrument. Real product, real free tier, real audience that includes adversaries. The fraud would arrive on its own, through the same channels real users came in.

Which is exactly what I wanted to study.

A note on distribution

I should mention how I got Pillarlab in front of an audience, because this matters for the data quality.

I run 17 Reddit communities. About 38K mod karma over the last 4 years. Last 12 months across those communities, I've pushed somewhere around 9 million organic impressions. I know how to get a post to the front page of a niche subreddit without breaking the rules and without getting banned. I know which mods are picky, which titles trigger the spam filter, which posting times perform on which subs, and roughly how Reddit's recommendation algorithm weights early engagement.

When I posted Pillarlab, I did it across the prediction markets and crypto subs I had earned standing in. No paid ads. No outreach. No Product Hunt. Just operator-shaped posts written for each community, dropped in at the right times.

The traffic that hit Pillarlab was, by the numbers, equivalent to a well-funded launch campaign. Pure organic Reddit. 3,000 signups in 4 weeks for a 5-day vibe-coded prediction markets product with no marketing budget.

I mention this because the fraud ratio in this experiment is not artificially high. I did not buy bot traffic. I did not seed click farms. I did not post on sketchy crypto Telegrams that attract pure scammers. I did exactly what a smart founder running organic distribution does. The fraud arrived on its own, through the same channels real users came in.

Which is the entire point. This is what your funnel actually looks like when you launch on Reddit, X, or any other organic distribution channel. You just usually do not have the instrumentation to see it.

On the signup form, I put Google reCAPTCHA. Just CAPTCHA.

That was it. Standard reCAPTCHA implementation. Standard threshold. The kind of setup a normal small SaaS team ships on day one and never thinks about again.

I deliberately did not run DataCops on the signup form yet. I wanted to see what CAPTCHA would catch on its own, and how good it actually is in 2026 against a real adversarial audience. Vendor pages tell you it's a rigorous gate. Reddit threads tell you it's broken. I wanted my own data.

So I left it as the only line of defense and watched.

Weeks 1 through 4: watching CAPTCHA do nothing

The signups started rolling in.

The dashboard looked great on paper. By week 1, a few hundred signups. By week 2, over a thousand. By week 4 I was sitting at over 3,000 signups for a 5-day vibe-coded prediction markets product that I had launched only on Reddit.

That number alone should have told me something. A real launch with paid traffic and a polished landing page does not always get 3,000 signups in 4 weeks for a niche product. A vibe-coded build with three Reddit posts behind it does not, unless something is very off.

But on paper everything looked fine. CAPTCHA scores looked clean. Almost everything was returning high "human" confidence. Nothing was tripping any threshold.

Except for one thing. The credits balance was draining 6 to 8 times faster than the active user count justified. Someone was burning through 50-credit free tiers and disappearing. Coming back. Disappearing again.

CAPTCHA was telling me everything was fine. The credits dashboard was telling me everything was on fire.

I had my answer about CAPTCHA: it was useless against real adversaries. Time to flip the switch.

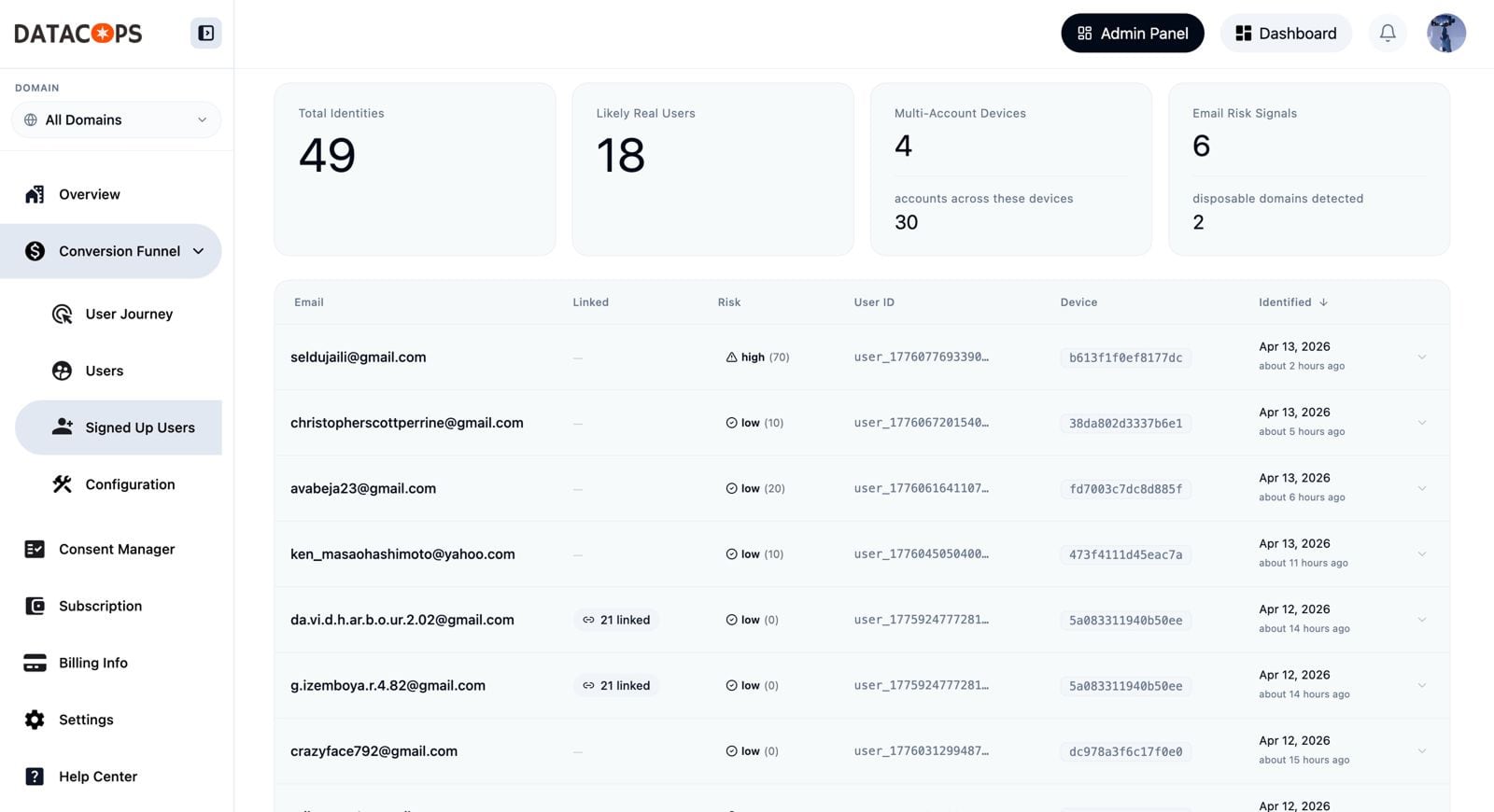

Turning on DataCops

I added the DataCops script to Pillarlab and started a bulk scan against every signup that was already in the database. The scan checks the email domain reputation and the IP class. New signups would be checked in real time going forward, including device fingerprinting.
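For a rough idea of what a retroactive scan like this involves, here is a minimal sketch. It is not the DataCops implementation. The function names, the `SUSPECT_DOMAINS` set, and the `DATACENTER_NETWORKS` list are all assumptions for illustration; a real scan would pull both from a maintained reputation feed, and the network below is a reserved documentation range standing in for a real datacenter block.

```python
import ipaddress

# Hypothetical inputs: stand-ins for a real domain and IP reputation feed.
SUSPECT_DOMAINS = {"zzuux.com", "yomail.info"}
DATACENTER_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]  # documentation range


def ip_class(ip: str) -> str:
    """Classify an IP as 'datacenter' if it falls in a listed network."""
    addr = ipaddress.ip_address(ip)
    if any(addr in net for net in DATACENTER_NETWORKS):
        return "datacenter"
    return "unknown"


def scan_signup(email: str, ip: str) -> list[str]:
    """Return the signals an existing signup trips, in a fixed order."""
    signals = []
    domain = email.rsplit("@", 1)[-1].lower()
    if domain in SUSPECT_DOMAINS:
        signals.append("throwaway_domain")
    if ip_class(ip) == "datacenter":
        signals.append("datacenter_ip")
    return signals
```

Running `scan_signup` over every row already in the signups table gives a per-account list of signals, and an empty list means nothing tripped.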

I expected maybe 30% of the 3,000 to come back as suspect.

The actual number was higher than that. A lot higher.

Of the ~3,000 signups, only around 730 came back as real humans. The remaining ~2,300 hit one or more critical signals: throwaway domain, datacenter IP, linked to other accounts on the same device once fingerprinting kicked in, or some combination of all three.

Roughly 77% of my "users" were fraud. CAPTCHA had passed every single one of them.

I sat there reading my own dashboard like I was watching a wildlife documentary. I had built it to surface exactly this pattern. I had never actually seen it operate at full speed against real adversaries.

It was hypnotic.

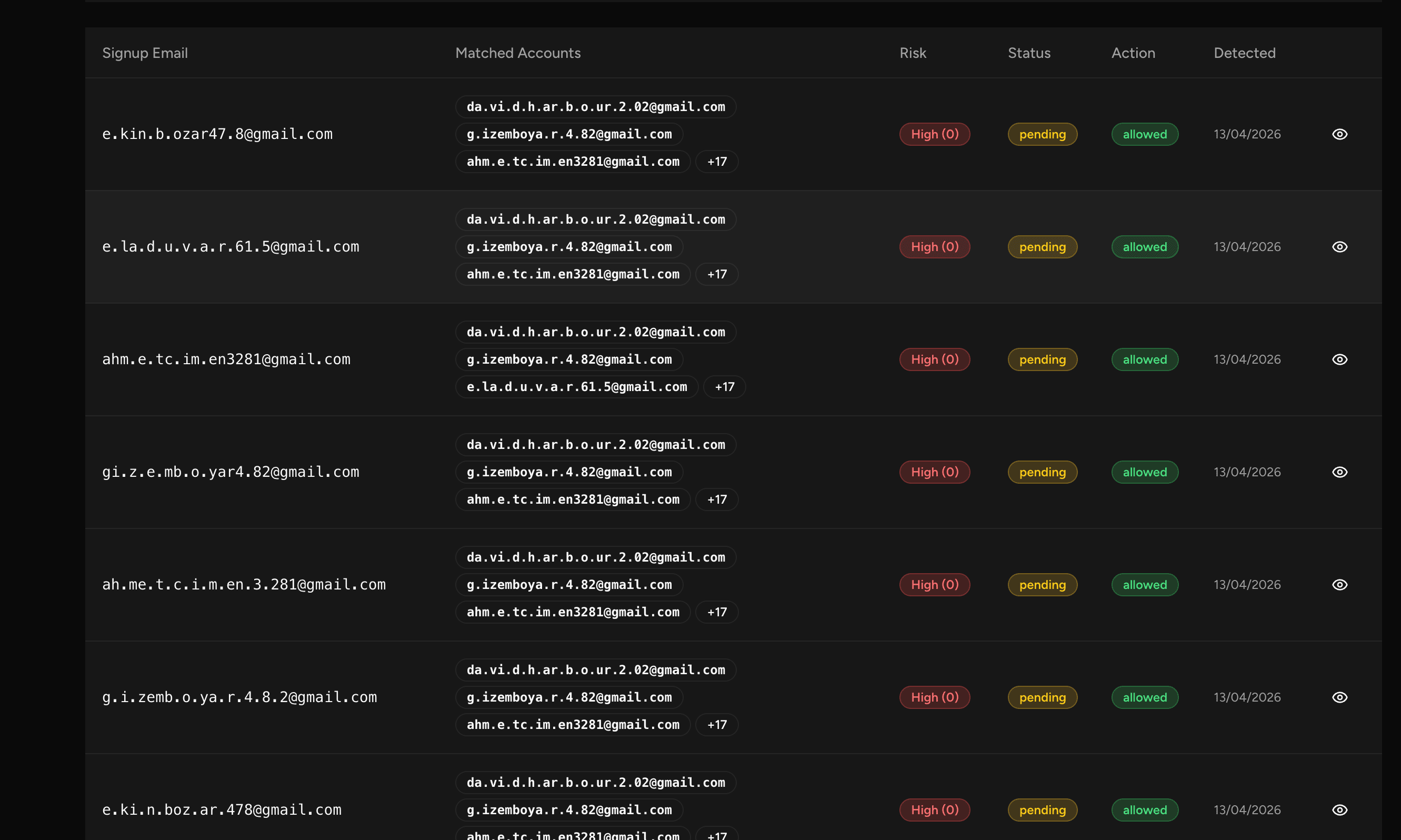

The 650-account guy

I let DataCops keep running. Real-time signups continued, now under fingerprinting. Within about a week the total reached ~4,500 signups. The new signups skewed the same way: the real-human count hovered near 730 while fraud kept climbing.

Once fingerprinting had been live for a few days, I sorted my fingerprint database by related_email_count descending. The top result hit me in the face.

One device fingerprint had 650 accounts attached to it.

One person. One laptop. Six hundred and fifty free trial signups in roughly a week.

I sat there staring at it for probably 10 minutes. I had to verify it three different ways because I assumed it was a bug. It wasn't. Same canvas hash, same WebGL renderer, same audio DAC, same font list, same screen resolution. Across 650 distinct signups using rotating throwaway email domains.
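The query behind that discovery can be sketched in a few lines. This is an illustration under assumptions, not the DataCops fingerprinting code: the attribute names in `FINGERPRINT_FIELDS` are hypothetical, and hashing an exact concatenation is a simplification, since production fingerprinting usually has to tolerate small changes between sessions.

```python
import hashlib
from collections import defaultdict

# Hypothetical attribute names; the real fingerprint schema is not public.
FINGERPRINT_FIELDS = ("canvas_hash", "webgl_renderer", "audio_hash", "fonts", "screen")


def fingerprint_id(attrs: dict) -> str:
    """Hash the device attributes in a fixed order so one device maps to one ID."""
    material = "|".join(str(attrs.get(name, "")) for name in FINGERPRINT_FIELDS)
    return hashlib.sha256(material.encode("utf-8")).hexdigest()


def related_email_counts(signups: list[dict]) -> list[tuple[str, int]]:
    """Count distinct emails per device, sorted by count descending."""
    emails_by_device = defaultdict(set)
    for signup in signups:
        emails_by_device[fingerprint_id(signup["device"])].add(signup["email"].lower())
    counts = [(fp, len(emails)) for fp, emails in emails_by_device.items()]
    return sorted(counts, key=lambda pair: pair[1], reverse=True)
```

The first element of the result is the device with the most distinct emails attached, which is where a 650-account farmer would surface.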

No bot. No automation script that I could detect from behavioral signals. The form completion times were variable in a way that scripts usually aren't. This was a human. One human. Who had decided that the optimal use of his week was to manually create 650 accounts on Pillarlab to farm 32,500 free AI credits.

That's just the post-install window. I have no fingerprint data from the original CAPTCHA-only period that produced the first ~3,000 signups. He was almost certainly active then too. The real number is higher. I just can't prove how much higher.

CAPTCHA had passed every single one of his 650 attempts.

I do not know what he was doing with the credits. I assume he was reselling them. Or running them through some other tool. Or maybe he just enjoyed it. The crypto-adjacent ecosystem has incentive structures that I will never fully understand.

What the rest of the fraud looked like

Once I knew what to look for, the patterns were embarrassingly obvious. The fraud broke into rough categories:

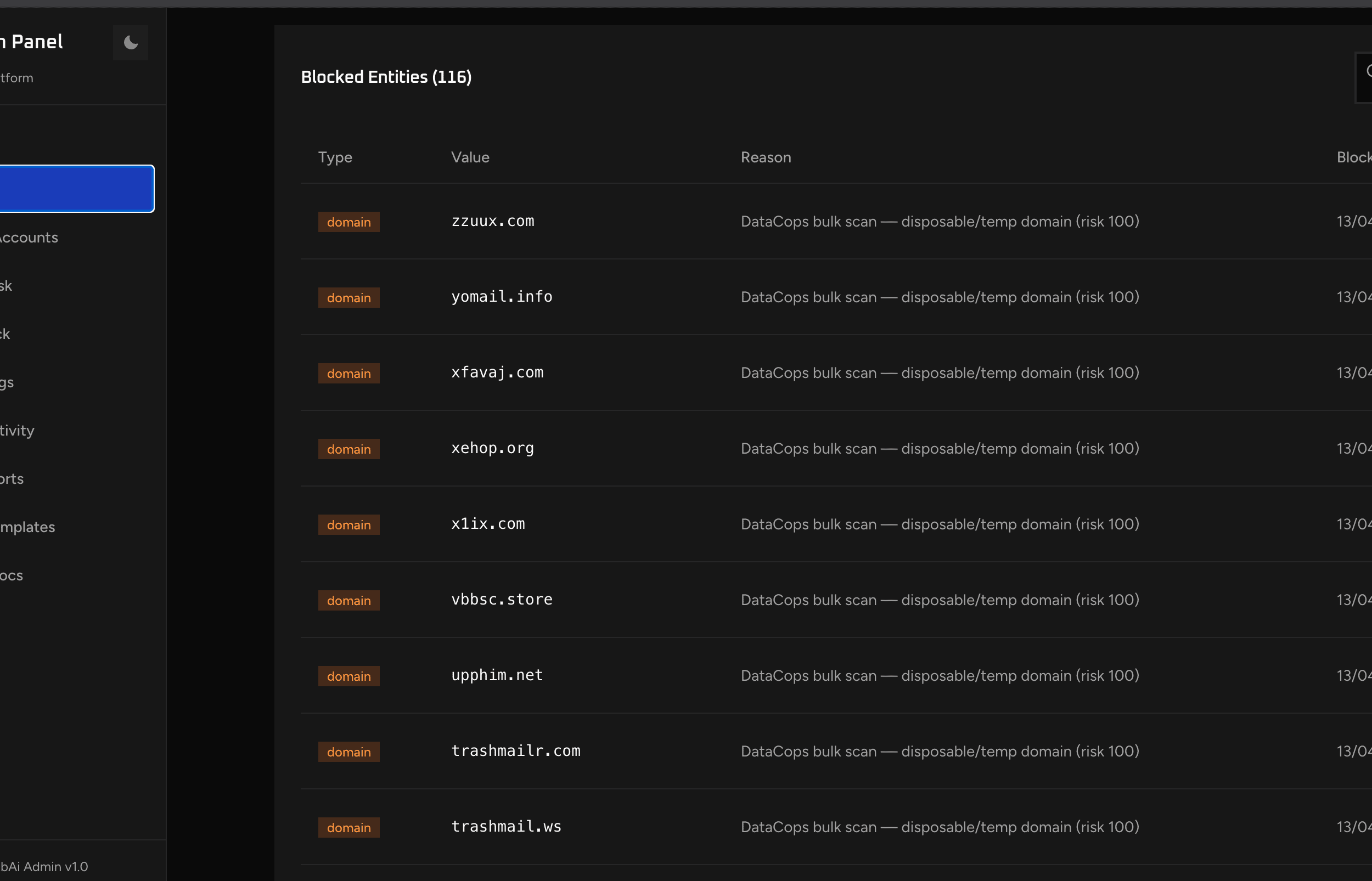

The throwaway domain farmers. Roughly 60% of the fraud signups. These were people who had registered their own custom throwaway email domains specifically to bypass standard disposable email blocklists. I saw zzuux.com, yomail.info, xfavaj.com, xehop.org, x1ix.com, vbbsc.store, upphim.net, and dozens more. None of them existed as real websites. Most had MX records that pointed to budget email forwarding services. Each domain had between 8 and 60 accounts on it.

These domains were not on Mailinator. Not on any public disposable list. They were too new. Some had been registered the same week the accounts were created. Whoever was running this had infrastructure. Cheap infrastructure, but real infrastructure.
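Because blocklists lag behind freshly registered domains, domain age at signup time is the more useful check. A minimal sketch, assuming you already have the domain's registration date from a registry lookup (the lookup itself is not shown, and the function name and 30-day threshold are illustrative choices, not values from this experiment):

```python
from datetime import date


def is_fresh_domain(registered_on: date, signup_on: date, max_age_days: int = 30) -> bool:
    """True if the email domain was registered shortly before the signup."""
    age_days = (signup_on - registered_on).days
    # A negative age means bad input data; treat it as "not fresh" rather than guess.
    return 0 <= age_days <= max_age_days
```

A domain registered the same week as the account would return `True` here, while an address on a long-established provider would not.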

The mid-tier farmers. Maybe 20%. Same playbook as the 650 guy, but smaller. One device with 21 accounts. Another with 47. Another with 100. Each one a single human running the same loop with slightly less commitment.

The IP-rotators. Around 15%. These had clean, non-throwaway email addresses (Gmail, ProtonMail, real-looking) but the IPs all came from datacenter ranges or VPN exit nodes. Frankfurt, Singapore, Virginia. The kind of IP profile no real prediction-markets user has. These felt automated, but the form completion behavior was inconsistent. I think these were humans behind VPNs, maybe in regions where VPN use is mandatory.

The actual bots. The remaining 5%. Headless Chrome signatures, Puppeteer detection triggered, form completed in under 1.2 seconds, suspicious navigator properties. These were the easiest to spot and the smallest fraction of the problem. Almost a rounding error.
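These bot tells are simple enough to sketch. The function below is a hypothetical illustration, not the detection logic that ran on Pillarlab: it assumes the client reports its user agent, the value of the browser's `navigator.webdriver` flag, and the seconds spent on the form. The 1.2-second cutoff is the one quoted above.

```python
def automation_signals(user_agent: str, webdriver_flag: bool, form_seconds: float) -> list[str]:
    """Return the automation tells a signup session trips, in a fixed order."""
    signals = []
    # Headless Chrome advertises itself in its default user agent string.
    if "HeadlessChrome" in user_agent:
        signals.append("headless_user_agent")
    # navigator.webdriver is true when the browser is driven by automation.
    if webdriver_flag:
        signals.append("navigator_webdriver")
    # Humans rarely complete a signup form this quickly.
    if form_seconds < 1.2:
        signals.append("form_too_fast")
    return signals
```

All three checks are easy for a determined attacker to spoof, which fits the finding that naive bots were the smallest and most visible slice.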

The math is the part that broke me a little. 95% of the fraud was humans. The actual bots, the thing I had spent three years building detection for, were the smallest threat. The real attackers were people sitting at laptops, possibly being paid pennies per account, possibly just bored, definitely undeterred by CAPTCHA.

What this taught me

I had been thinking about signup fraud wrong before this experiment.

Bot detection is not the answer. The bots are 5% of the problem.

CAPTCHA is not the answer. CAPTCHA was built in 1997 to stop scripted crawlers. It was never designed to stop a human who has decided to cheat. And the human who has decided to cheat is the actual adversary now.

Email validation is not the answer. The throwaway domains pass every standard email check. They have valid MX records. They accept mail. They look legitimate to every off-the-shelf email validator on the market.

Rate limiting is not the answer. The 650-account guy was not in a rush. He spaced out his signups. The IP-rotators came from different IPs every time. Rate limiting only catches the unsophisticated.
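To see why pacing defeats rate limiting, consider a standard sliding-window limiter. The sketch below is illustrative only: the limit of 5 signups per hour per key is an assumed policy, and the even spacing is an assumption too, since the actual timing of his signups is not recorded here. Spread evenly, 650 signups in a week is roughly one every 15.5 minutes.

```python
from collections import deque


class SlidingWindowLimiter:
    """Allow at most max_events per key within any window_seconds span."""

    def __init__(self, max_events: int, window_seconds: float):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._events: dict[str, deque] = {}

    def allow(self, key: str, now: float) -> bool:
        events = self._events.setdefault(key, deque())
        # Drop timestamps that have aged out of the window.
        while events and now - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.max_events:
            return False
        events.append(now)
        return True


WEEK_SECONDS = 7 * 24 * 3600
spacing = WEEK_SECONDS / 650  # about 930 seconds between signups

limiter = SlidingWindowLimiter(max_events=5, window_seconds=3600)
# Even from a single key, every paced signup is allowed: allowed == 650.
allowed = sum(limiter.allow("same-ip", i * spacing) for i in range(650))
```

At that pace only three earlier signups ever sit inside the one-hour window, so the limit of five is never reached. Rotating IPs makes the limiter even less relevant, because each request arrives under a fresh key.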

What actually works is identity context at the moment of signup. Specifically:

- Is this email domain freshly registered, or is it a domain you have seen before?
- How many other accounts trace back to this device fingerprint?
- What is the network class of this IP: residential, datacenter, VPN, or mobile carrier?
- How does this session's behavior cluster with prior fraudulent signups?

None of these signals individually proves anything. Together they make fraud very hard to hide. The 650-account guy was invisible to CAPTCHA, email validation, and rate limiting. He was screaming at the device fingerprint layer.
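One way to picture how the four questions combine is a simple additive score. Everything in this sketch is hypothetical: the field names, the weights, and the cap are invented for illustration and are not the DataCops scoring model.

```python
from dataclasses import dataclass


@dataclass
class SignupSignals:
    domain_is_fresh: bool         # email domain registered very recently
    linked_accounts: int          # other accounts on the same device fingerprint
    ip_class: str                 # "residential", "datacenter", "vpn", or "mobile"
    behavior_matches_fraud: bool  # session clusters with prior fraudulent signups


def risk_score(signals: SignupSignals) -> int:
    """Combine the signals into a 0-100 score using illustrative weights."""
    score = 0
    if signals.domain_is_fresh:
        score += 20
    if signals.ip_class in {"datacenter", "vpn"}:
        score += 15
    if signals.behavior_matches_fraud:
        score += 15
    # Linked accounts weigh the most: 5 points each, capped at 50.
    score += min(signals.linked_accounts, 10) * 5
    return min(score, 100)
```

With these weights, a device carrying hundreds of linked accounts scores 50 from the fingerprint signal alone, even when its email, IP, and behavior all look clean.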

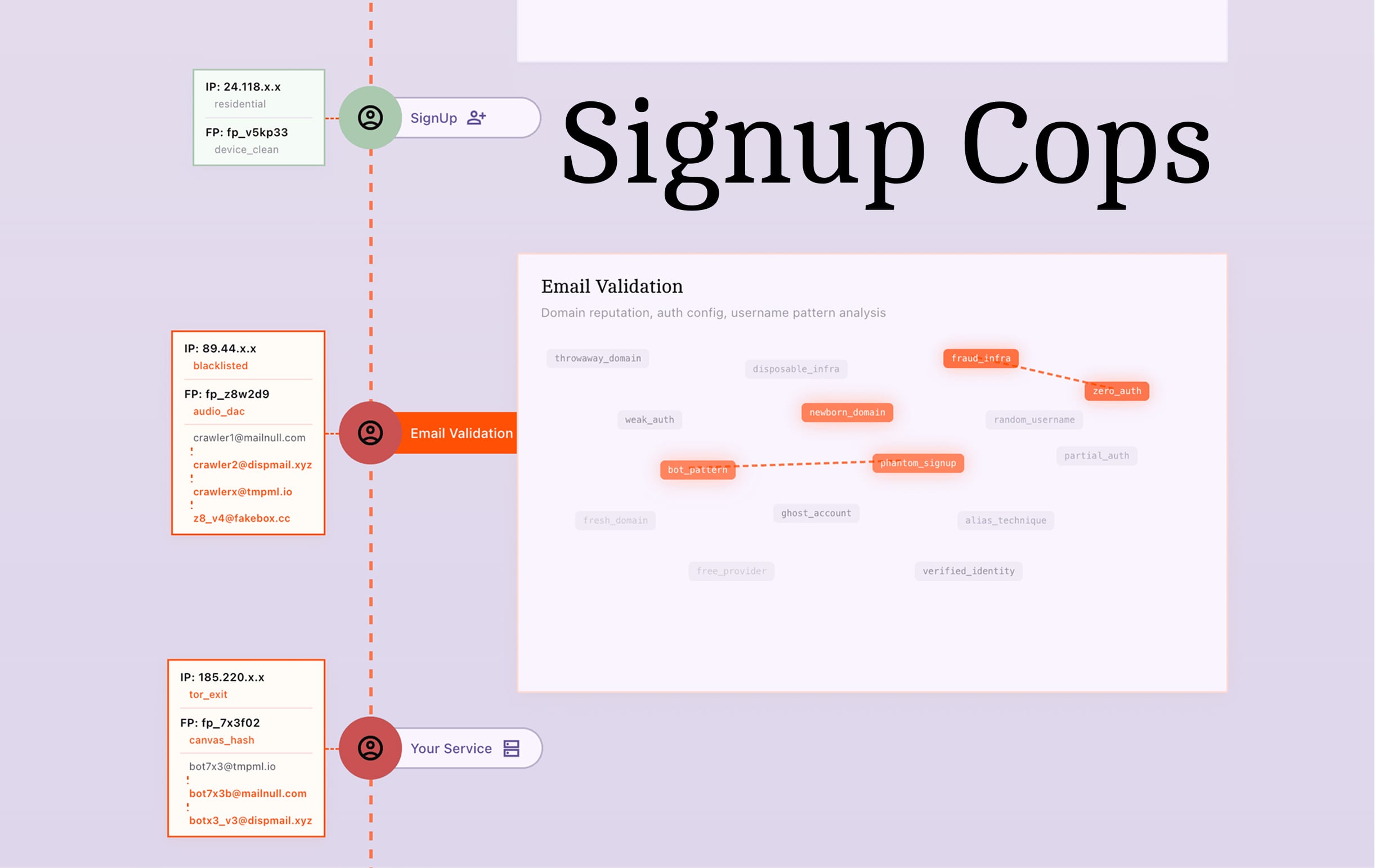

What I built: SignupCops

Once I had the behavioral data, the product shape was obvious. SignupCops sits on top of the DataCops fingerprinting infrastructure.

The thesis: don't gate. Don't decide for the application. Just hand the application the full identity context per signup, and let the application decide what to do. Block, step-up verify, welcome back to existing account, silently flag for manual review, whatever the UX wants.

Integration is honestly the easy part.

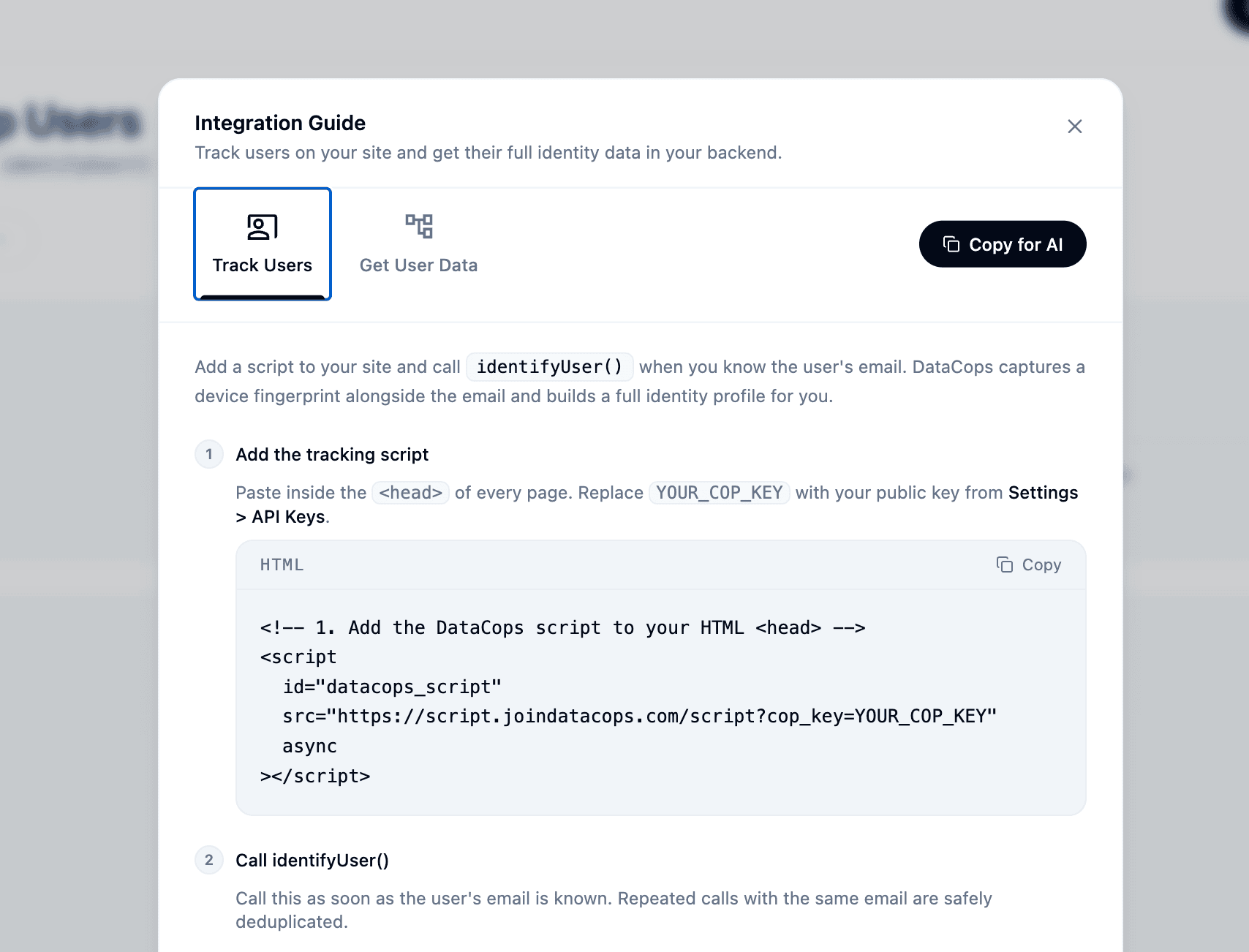

You add the DataCops script to your site. Inside the DataCops dashboard you'll find an Integration Guide that looks like this:

[Screenshot: DataCops Integration Guide modal, "Track Users" tab selected, "Copy for AI" button visible]

Hit "Copy for AI." Paste it into Claude or Cursor. Describe what you want your signup logic to do.

That's it.

You get back the full identity picture per signup: risk score, IP class, email domain reputation, device fingerprint hash, the count of other accounts already linked to that fingerprint, and the related emails on that device. Your AI writes the gating code for your stack against your business rules: block disposable domains, step-up verify above a risk score of 75, welcome a linked signup back to its primary account, reject outright, or route to manual review. Whatever makes sense for your UX.

No CAPTCHA. No vendor risk-score black box. No one-size-fits-all rule engine. You control the gate. We just hand you the keys.

The check takes under 200ms. Real users do not notice. The 650-account guy gets caught at signup attempt #6.
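As a concrete picture of what that generated gating code might look like, here is a minimal sketch. The `IdentityContext` field names are assumptions and not the actual DataCops response schema, and the rule order and the cutoff of 5 linked accounts are illustrative business rules. With that cutoff, a sixth signup from a device that already has five accounts is blocked.

```python
from dataclasses import dataclass, field


@dataclass
class IdentityContext:
    # Hypothetical shape of the per-signup identity context.
    risk_score: int
    ip_class: str
    disposable_domain: bool
    linked_account_count: int
    related_emails: list[str] = field(default_factory=list)


def decide(ctx: IdentityContext, max_linked: int = 5, step_up_above: int = 75) -> str:
    """Map identity context to an action; rules are checked in priority order."""
    if ctx.disposable_domain:
        return "block"
    if ctx.linked_account_count >= max_linked:
        return "block"
    if ctx.risk_score > step_up_above:
        return "step_up"
    if ctx.linked_account_count > 0:
        return "welcome_back"
    return "allow"
```

The point of returning an action name instead of enforcing it is that the application stays in control: the same context could just as easily feed a silent flag for manual review.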

What I want you to take from this

DataCops is live as of today. SignupCops is the first product I am shipping out of this research.

If you are running anything with a free tier — credits, trial, freemium, gated content — you have this problem and you probably do not know how bad it is. Every metric that would surface it (CAC, activation rate, deliverability, ROAS) has plausible alternative explanations. You will rationalize it as "weak onboarding" or "wrong audience" for months before you check the actual signup data.

You can audit it tonight without any tool. Pull your last 1,000 signups. Sort by email domain. Count how many domains you have never heard of. If it's more than 10%, you know.
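That audit fits in a short script. This sketch assumes you can export signup emails as a list of strings. `KNOWN_PROVIDERS` is a placeholder you would extend with domains you actually recognize, and it reads the 10% rule as a share of signups, not a share of distinct domains.

```python
from collections import Counter

# Placeholder list: extend with providers and customer domains you recognize.
KNOWN_PROVIDERS = {"gmail.com", "outlook.com", "yahoo.com", "icloud.com", "proton.me"}


def unfamiliar_domain_share(emails, known=KNOWN_PROVIDERS):
    """Return (share of signups on unfamiliar domains, [(domain, count), ...])."""
    domains = [e.rsplit("@", 1)[-1].strip().lower() for e in emails if "@" in e]
    if not domains:
        return 0.0, []
    counts = Counter(domains)
    # most_common() lists the biggest clusters first.
    unfamiliar = [(d, n) for d, n in counts.most_common() if d not in known]
    share = sum(n for _, n in unfamiliar) / len(domains)
    return share, unfamiliar
```

If the returned share is above 0.10, start with the top of the unfamiliar list, since the largest clusters come first.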

The gate is wide open. The bots and the humans both know it.

Sometimes you have to build the honeypot and let the bears in.

joindatacops.com/signup-cops — public today. Free tier covers a few thousand checks per month.

PillarlabAI is still running. Real customers, real Stripe charges, real prediction-market analytics. It just also happens to be the most instrumented signup funnel I've ever built.